Do you have some time for a little π ?Imagine you’re stranded on a desert island and you really need to find a good approximation of π for some super machine that you’re building to make your escape. Perhaps it’s AC powered from a tidal source or wind generator and there are a couple of phase angle calculations you need

Do you have some time for a little π ?

Imagine you’re stranded on a desert island and you really need to find a good approximation of π for some super machine that you’re building to make your escape.

Perhaps it’s AC powered from a tidal source or wind generator and there are a couple of phase angle calculations you need to make for the flux capacitor to power up? Or maybe the temporal calculations for your wormhole require just one more decimal place of π . Suffice it to say that you really need a good approximation to π for things to work just right.

Thing is, you can’t remember π to enough decimal places! If only you’d paid more attention at school, right? Given you have a pencil and some paper, and perhaps a simple electronic calculator (ahem), there may just be a way to get the information you need. Fortunately you also have a good recollection of the Mandelbrot Set and its properties from your time studying chaos and fractals (as you know, a subject quite close to my heart…)

I don’t want to get too much into the maths behind the Mandelbrot Set in this article, (if you want to, take a look here), but what I’d like for you to bear in mind is that its calculation is based upon the concept of prisoners and escapees - something quite relevant to our island scenario.

The core of the computation is based on an equation of the form,

If we calculate the value of this function when z = 0.0 and feed the result back into c we get an infinite sequence of the form:

With the Mandelbrot Set the result as the sequence progresses either gets smaller and smaller or larger and larger and the original point becomes either imprisoned within the set or escapes from the set, hence the common prisoners and escapees analogy.

In fact it quickly becomes apparent that if we plug a number into this feedback equation and the result is <= 2 then the number is in the Mandelbrot Set and if it’s > 2 it’s out of the set. (The number 2 is an arbitrary choice in this case, for us, and we’ll take it just as something that encompasses the whole set for rendering purposes. This time.)

Now, let’s not get into complex numbers too much - we’re stranded on a desert island and it’s hot!

Now, let’s not get into complex numbers too much - we’re stranded on a desert island and it’s hot - let’s just think about the x-axis in this case or the real numbers only.

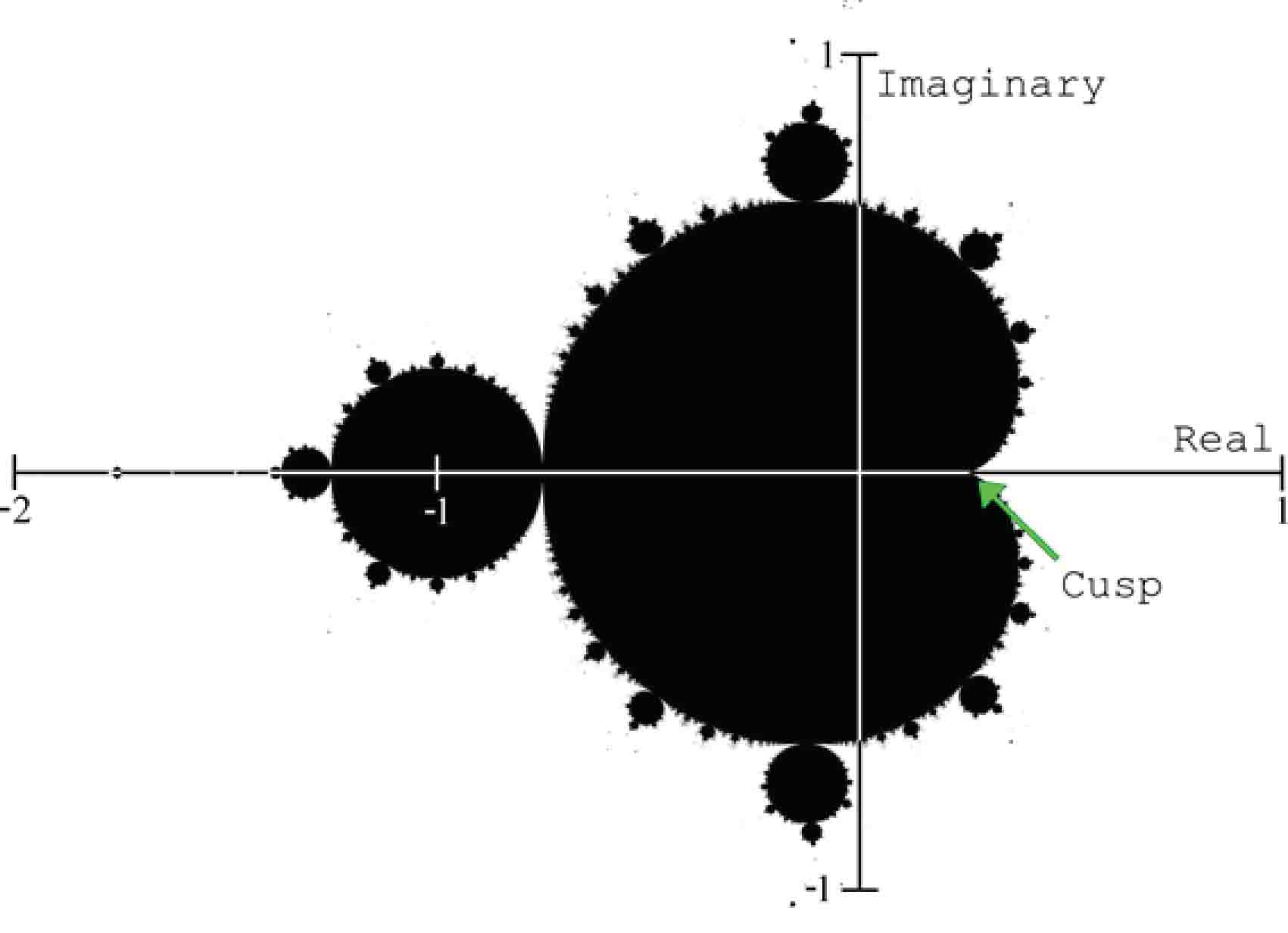

See that bit of the set where it crosses the x-axis, that’s exactly at the value of x = 0.25 and the imaginary part of the related complex number is zero such that anything less than or equal to 0.25 is in the set and anything greater than 0.25 is outside the set. It’s sometimes referred to as the cusp of the Mandelbrot Set.

What we’re interested in (and how people sometimes colour the set) is how many iterations of the feedback equation are necessary for the result to grow greater or less than a specific number (we’re using 2, remember?) In this case we’re interested in the border of the set (which itself is fractal, but again let’s skip some interesting detail for the sake of brevity) and the specific number we’re interested in as a boundary value is the number 2.

Let’s take the cusp x-axis location c = 0.25 as our starting point and explore the numbers just a little bit bigger than c. We know that anything less will be trapped in the set, but how many iterations (let’s call this I(c)) will it take for the result of the feedback equation to grow larger than 2 if we add a very small amount to c? Let’s call this additional amount ε such that we’re investigating c+ε as a starting point.

Let’s choose say 1.0 as a value for ε such that c is now 0.25 + 1.0 = 1.25 (and z always is 0.0):

I(0): 0.02 + 1.25

I(1): 1.252 + 1.25

I(2): 1.56252 + 1.25 = 2.8125 [bang, we’re bigger than 2.0]It took 2 iterations to break out and get larger than 2.0

Let’s choose a smaller number, say ε = 0.5, to get closer to the set so c is now 0.25 + 0.5 = 0.75:

I{0): 0.02 + 0.75

I(1): 0.752 + 0.75 = 1.3125

I(2): 1.31252 + 0.75 = 2.4726 [bang]Again, it took 2 iterations again to break out.

Let’s go smaller, how about ε = 0.3 such that c is not 0.25 + 0.3 = 0.55:

I(0): 0.02 + 0.55

I(1): 0.30252 + 0.55 = 0.8525

I(2): 0.85252 + 0.55 = 1.2767

I(3): 1.27672 + 0.55 = 2.1801… [bang]This time it took 3 iterations…

Let’s make a table of ε (how far we are from c) vs. I(c) (or the iterations needed to escape):

| ε | I(c) |

| 1.0 | 2 |

| 0.1 | 8 |

| 0.01 | 30 |

| 0.001 | 97 |

| 0.0001 | 312 |

| 0.00001 | 991 |

| 0.000001 | 3140 |

| 0.0000001 | 9933 |

| 0.00000001 | 31414 |

| 0.000000001 | 99344 |

| 0.0000000001 | 314157 |

| 0.00000000001 | 993457 |

| 0.000000000001 | 3141625 |

| 0.0000000000001 | 9935818 |

| 0.00000000000001 | 31430913 |

Can you see what’s happening here?

As we get closer and closer to 0.25 by choosing smaller and smaller positive offsets the number of iterations required to exceed 2.0 and escape the set is alternately approximating to the sequence of digits in π.

Even on a desert island with a rudimentary knowledge of the approximate value of π (i.e. at the very least we know it starts with a 3.something) we can insert the decimal point appropriately and realise our answer. But, there’s something else going on here.

Ok, we’re getting alternate approximations to π, but there’s something else, something really subtle. Can you see it? Let’s add another column to the table, √ε * I(n).

(I’ve rounded the extra column to 6 decimal places for brevity.)

| ε | I(n) | √ε * I(n) |

| 1.0 | 2 | 2.000000 |

| 0.1 | 8 | 2.529822 |

| 0.01 | 30 | 3.000000 |

| 0.001 | 97 | 3.067409 |

| 0.0001 | 312 | 3.120000 |

| 0.00001 | 991 | 3.133817 |

| 0.000001 | 3140 | 3.140000 |

| 0.0000001 | 9933 | 3.141090 |

| 0.00000001 | 31414 | 3.141400 |

| 0.000000001 | 99344 | 3.141533 |

| 0.0000000001 | 314157 | 3.141570 |

| 0.00000000001 | 993457 | 3.141587 |

| 0.000000000001 | 3141625 | 3.141625 |

| 0.0000000000001 | 9935818 | 3.141982 |

| 0.00000000000001 | 31430913 | 3.143091 |

It’s just a little bit like magic. It turns out that √ε * I(n) for every row is an approximation to π and it’s getting closer and closer! Also, we don’t even need to insert a decimal point ourselves.

Unfortunately it is a rather computationally intensive exercise, and the most inefficient method of approximating π that I’ve ever come across, but I didn’t promise it was efficient and it’s certainly something you could certainly do on a desert island whilst awaiting rescue if you need to pass the time. A lot of time.

Extra Credit:

- If you have a powerful machine and wish to continue the sequence here’s the Mathematica snippet I was using to calculate the number of iterations:

ostart = 0.25;

e = 0.0000000000000001;

c = ostart + e;

iter2[x_] := x^2 + c;

Length[NestWhileList[iter2, c, # <= 2.0 &]]

As the number of iterations increases so do rounding errors when computing on digital computers (depending on how they’re stored) so there is a limit to how accurate we can go on a particular machine. If you have a better method, drop me a line and let me know - I’d be interested to know how far we can get in a reasonable time.

-

While researching the article (and trying to remember how it worked from when I originally came across this method) I discovered a great video by Dr Holly Krieger that explains a subset of the calculation process in a video on the YouTube Numberphile channel:

Pi and the Mandelbrot Set - Numberphile

https://www.youtube.com/watch?v=d0vY0CKYhPY - There are, in fact, many other ways to approximate π using the Mandelbrot Set, but this is pretty much the simplest and also doesn’t involve complex numbers even though the calculations are pretty intensive.

-

"Pi and the Mandelbrot Set” by A Klebanoff

http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.361.1480I’m actually plugging this one additionally as I once had an article in the same journal, though a little earlier - Vol. 2, No. 3, 1994:

http://www.worldscientific.com/doi/abs/10.1142/S0218348X9400051X